As modeling frameworks and their computational requirements grow more complex, organizations find it harder to keep up with the evolving demands of Machine Learning.

MLOps and DataOps help data scientists embrace collaborative practices between various technology, engineering, and operational paradigms.

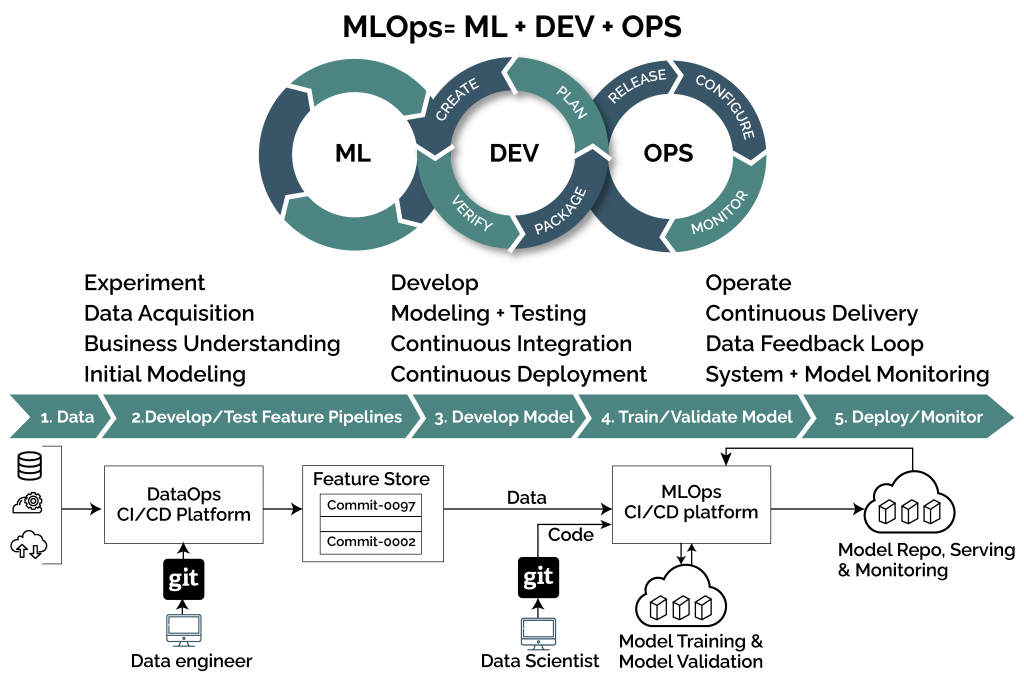

MLOps is a set of practices that combines Machine Learning, DevOps, and Data Engineering for a reliable and data-centric approach to running Machine Learning systems in production.

It starts by defining a business problem, a hypothesis about the value extraction from the collected data, and a business idea for its application.

What is MLOps, and what does it entail?


MLOps is a new way for business representatives, mathematicians, scientists, machine learning specialists, and IT engineers to cooperate in creating artificial intelligence systems.

It applies a set of practices to augment quality, simplify the management processes, and automate Machine Learning and Deep Learning models’ deployment in a large-scale production environment.

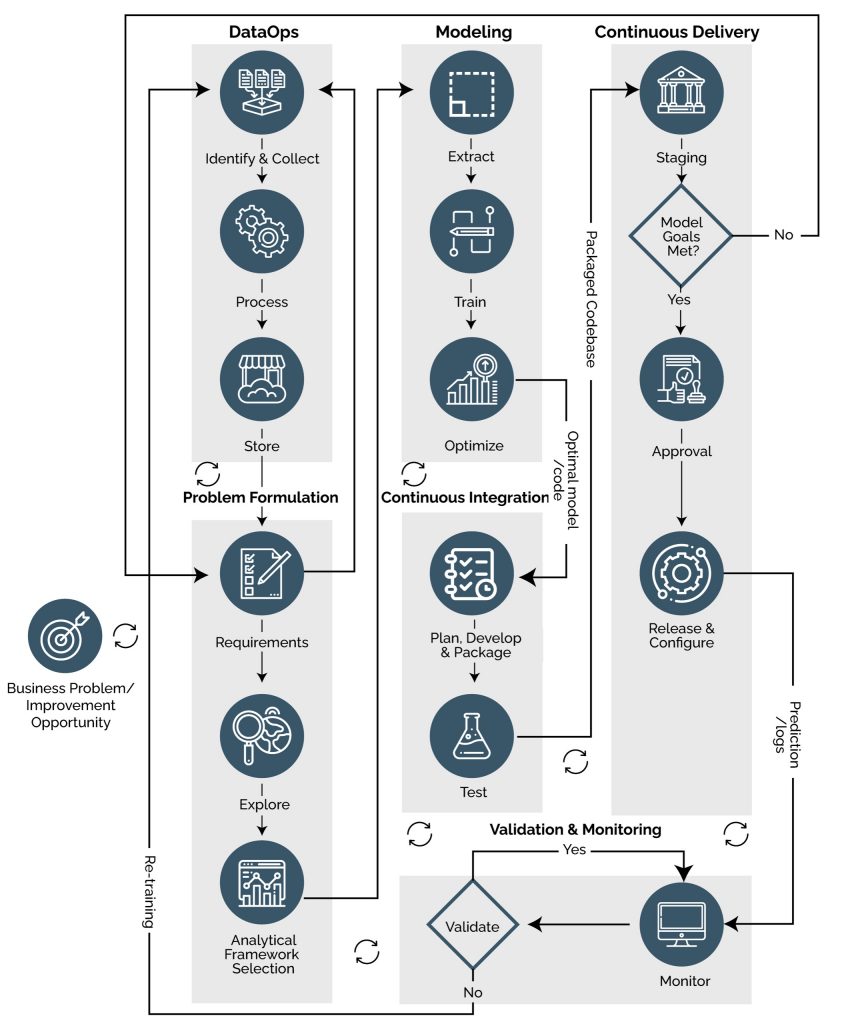

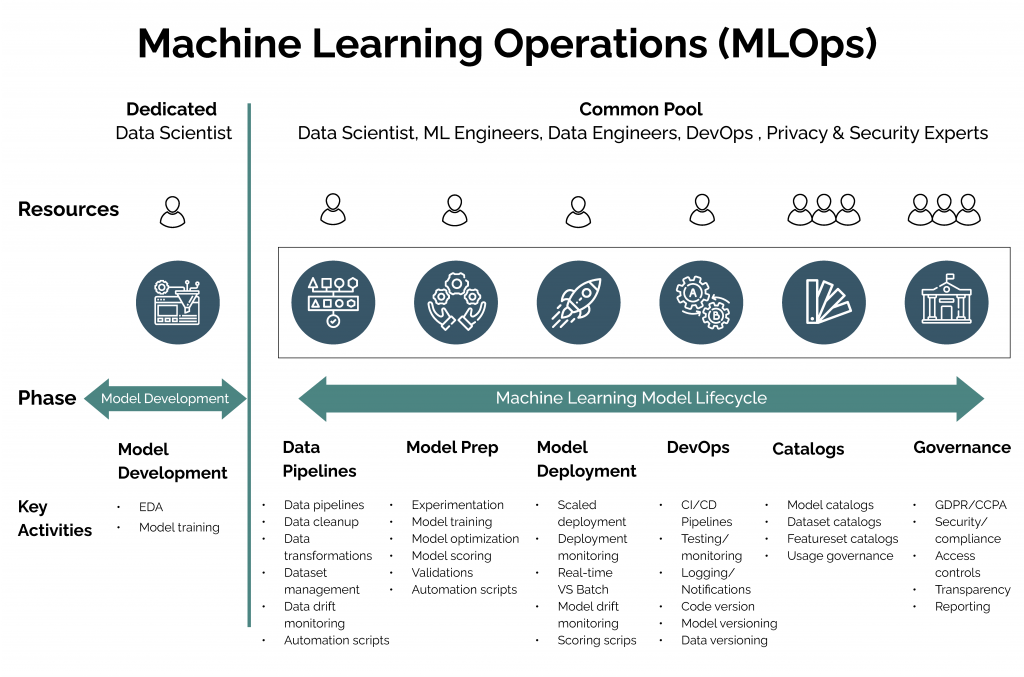

The processes involved in MLOps include data gathering, model creation (SDLC, continuous integration/continuous delivery), orchestration, deployment, diagnostics, governance, and business metrics.

Key Components of MLOps

MLOps Lifecycle


When the model needs to be retrained on new data during operation, the cycle restarts: the model is refined and tested, and a new version is deployed.
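
The retrain, test, and deploy loop described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `ModelRegistry`, `train_mean_model`, `retrain_cycle`, and the `max_error` threshold are hypothetical names invented for this example, and the "model" is a trivial mean predictor standing in for a real training job.

```python
from dataclasses import dataclass, field


@dataclass
class ModelRegistry:
    """Hypothetical in-memory registry; a real one would persist versions."""
    versions: list = field(default_factory=list)

    def deploy(self, model, error):
        # Each deployment gets the next sequential version number.
        version = len(self.versions) + 1
        self.versions.append({"version": version, "model": model, "error": error})
        return version


def train_mean_model(values):
    """'Train' a stand-in model that always predicts the training mean."""
    mean = sum(values) / len(values)
    return lambda: mean


def mean_abs_error(model, values):
    """Average absolute gap between the model's prediction and held-out values."""
    prediction = model()
    return sum(abs(v - prediction) for v in values) / len(values)


def retrain_cycle(registry, train_values, holdout_values, max_error):
    """One pass of the cycle: retrain, test, and deploy only if the test passes."""
    model = train_mean_model(train_values)
    error = mean_abs_error(model, holdout_values)
    if error > max_error:
        return None  # the candidate failed testing, so nothing is deployed
    return registry.deploy(model, error)
```

Each call to `retrain_cycle` represents one turn of the lifecycle. A candidate that fails the test step returns `None`, and the previously deployed version stays in place.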

Why do we need MLOps?

MLOps streamlines process collaboration and integration through the automation of retraining, testing, and deployment.

AI projects include stand-alone experiments, ML coding, training, testing, and deployment, all of which have to fit into the CI/CD pipelines of the development lifecycle.

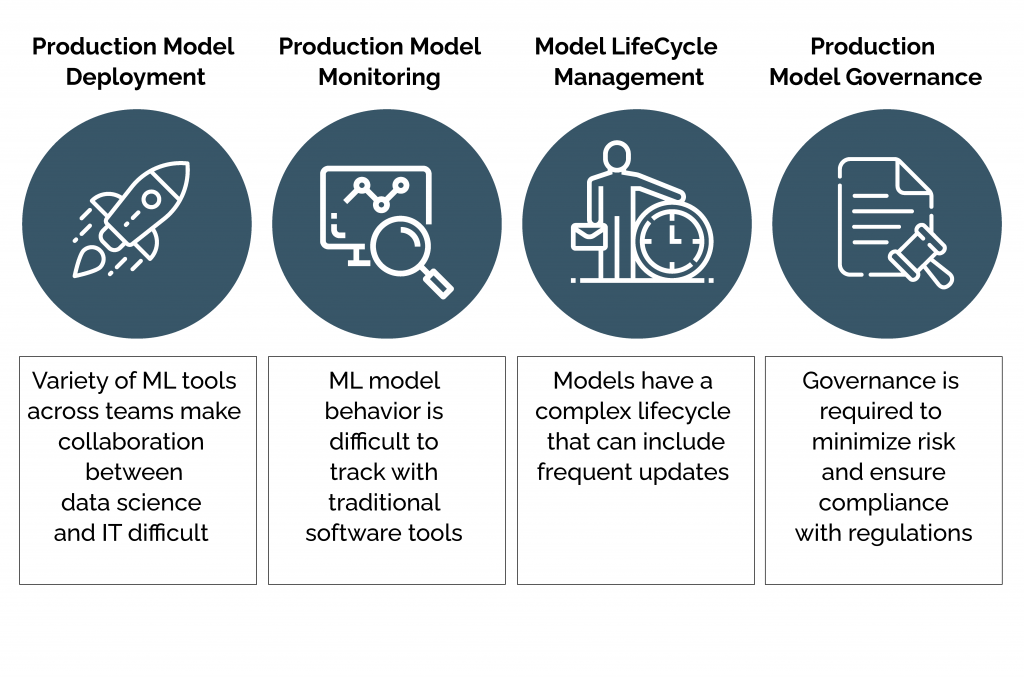

MLOps automates model development and deployment to enable faster go-to-market and lower operational costs. It allows managers and developers to become more agile and strategic with their decision-making while addressing the following challenges.

Unrealized Potential: Many firms are striving to include ML in their applications to solve complex business problems. However, for many, incorporating ML into production has proven even more difficult than finding expert data scientists, useful data, and optimized training models.

Even the most sophisticated software solutions get caught up in deployment and become unproductive.

Frustrating Lag: Machine learning engineers often need to deploy an already trained model, which can cause anxiety. Communication between the operations and engineering teams requires incremental sign-offs and facilitation that can span weeks or months. Sometimes even a straightforward upgrade can feel insurmountable, especially when a model enhancement involves switching from one ML framework to another.

Fatigue: Production processes lead to frustrated or underutilized engineers whose projects may not make it to the finish line. This causes process fatigue that stifles creativity and diminishes the motivation to deliver benchmark customer experiences.

Weak Communication: Data engineers, data scientists, researchers, and software engineers often seem to be worlds apart from the operations team, both in physical location and in way of thinking.

There is rarely sufficient streamlining to bring development and data management work to full production.

Lack of Foresight: Many ML data scientists have no reliable way of knowing whether their trained models will work correctly in production. Even writing test cases and continually auditing their work with QA or unit testing cannot prevent the data encountered in production from differing from the data used to train these models.

Therefore, gaining access to production telemetry to evaluate model performance against real-world data is very important.

However, non-streamlined CI/CD processes for placing a new model into production are a significant hindrance to deriving value from machine learning.
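
One way to use production telemetry for the training-versus-production gap described above is a drift score such as the Population Stability Index (PSI), which compares how a feature is distributed in the two data sets. The sketch below is a minimal version that assumes a single numeric feature. The function name, the ten equal-width bins, and the `eps` floor for empty bins are choices made for this example, not part of any particular MLOps product.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a training sample (expected) and a production sample (actual).

    Bins are equal-width over the training range; production values outside
    that range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor empty bins at eps so the logarithm below stays defined.
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


# Illustrative data only: production values shifted upward by 50.
training = list(range(100))
production = [v + 50 for v in training]
drift_score = population_stability_index(training, production)
```

A score of zero means the two samples fall into the bins in identical proportions, and larger scores mean a larger shift. A PSI above roughly 0.2 is a commonly quoted rule of thumb for a shift worth investigating, but the right threshold depends on the feature and the model, so treat it as a starting assumption.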

Misused Talent: Data scientists differ from IT engineers. They primarily develop complex algorithms, neural network architectures, and data transformation mechanisms but do not handle Microservices deployment, network security, or other critical aspects of real-world implementations.

MLOps merges multiple disciplines with varied expertise to infuse machine learning into real applications and services.

DevOps vs. MLOps

By analogy with DevOps and DataOps, MLOps helps businesses organize continuous cooperation and interaction among everyone who works with the machine learning models created by data scientists and ML developers.

Though MLOps evolved from DevOps, the two concepts are fundamentally different in the following ways.

| MLOps | DevOps |
| --- | --- |
| It is more experimental in nature. | It is more implementation- and process-oriented. |
| It needs a hybrid team of software engineers, data scientists, ML researchers, etc., focusing on exploratory data analysis, model development, and experimentation. | Teams are mostly composed of data engineers, scientists, and developers. |
| Testing an ML system involves model validation and training, in addition to unit and integration testing. | Mostly regression and integration testing are done. |
| A multi-step pipeline is required to retrain and deploy a model automatically. | A single CI/CD pipeline is enough to build and deploy a software release. |
| Even with automated steps, manual intervention is often needed before new models are deployed. | No manual intervention is required for a new deployment, since the entire process can be automated using various tools. |
| ML models can experience performance degradation in production due to evolving data profiles. | Software deployed through DevOps does not experience this kind of degradation from evolving data. |
| Continuous Training (CT) is used to automatically retrain candidate models for testing and serving, and Continuous Monitoring (CM) is used to capture and analyze production inference data and model performance metrics. | Continuous Training and Continuous Monitoring are not used; Continuous Integration and Continuous Deployment are. |

The Four Pillars of MLOps

These four critical pillars support any agile MLOps solution and help it deliver machine learning applications safely in a production environment, even in problematic situations.

The Fifth Pillar of MLOps

Production Model Management: To keep ML models consistent and meet all business requirements at scale, a logical, easy-to-follow Model Management method is essential. Though optional, this paradigm streamlines end-to-end model training, packaging, validation, deployment, and monitoring.

With Production Model Management, organizations can:

- Proactively address common business issues (such as regulatory compliance).

- Enable the sustainable tracking of data, models, code, and model versioning.

- Package and deliver models in reusable configurations.

Model Lifecycle Management: MLOps simplifies production model lifecycle management by automating troubleshooting and triage, champion/challenger gating, and hot-swap model approvals. It produces a secure workflow that efficiently manages your models’ lifecycle as the number of models in production grows exponentially.

The key actions include:

- Champion/challenger model gating introduces a new model (aka ‘challenger’) by first running it in production alongside its predecessor (aka ‘champion’) for a defined period and comparing their performance. The challenger takes over only if it outperforms the champion in terms of quality and model stability.

- Troubleshooting and Triage are used to monitor and rectify suspicious or poorly performing areas of the model.

- Model approval is designed to minimize the risks associated with model deployment by ensuring that all relevant business or technical stakeholders have signed off.

- Model update offers the ability to swap models without disrupting the production workflow, which is crucial for business continuity.
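
The champion/challenger gate in the list above can be sketched as a simple comparison. This is a hedged illustration: `gate_challenger`, `min_gain`, and `max_extra_spread` are hypothetical names and thresholds, and it assumes both models were scored on the same evaluation windows using a metric where higher is better. Quality is approximated by the mean score and stability by the spread of scores across windows.

```python
from statistics import mean, pstdev


def gate_challenger(champion_scores, challenger_scores,
                    min_gain=0.01, max_extra_spread=0.01):
    """Return True if the challenger should replace the champion.

    Each list holds one score per evaluation window over the trial period.
    """
    if not champion_scores or len(champion_scores) != len(challenger_scores):
        raise ValueError("both models need scores for the same windows")

    # Quality: the challenger must beat the champion's average by min_gain.
    gain = mean(challenger_scores) - mean(champion_scores)

    # Stability: the challenger's scores must not vary much more than the champion's.
    extra_spread = pstdev(challenger_scores) - pstdev(champion_scores)

    return gain >= min_gain and extra_spread <= max_extra_spread


# Illustrative scores only, one per evaluation window.
champion = [0.80, 0.81, 0.79, 0.80]
steady_challenger = [0.84, 0.85, 0.84, 0.85]
erratic_challenger = [0.95, 0.70, 0.99, 0.75]
```

With these made-up numbers, the steady challenger passes the gate, while the erratic one is rejected despite its higher average because its scores swing too widely. In practice the gate result would feed the approval step rather than trigger a swap on its own.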

Production Model Governance: Organizations have to comply with CCPA and EU/UK GDPR before putting their machine learning models into production.

Organizations need to automate model lineage tracking (approvals, versions deployed, model interactions, updates, etc.).

MLOps offers enterprise-grade production model governance to deliver:

- Model version control

- Automated documentation

- Comprehensive lineage tracking and audit trails for the suite of production models

This helps minimize corporate and legal risks, maintain production pipeline transparency, and reduce/eliminate model bias.
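
To make lineage tracking and audit trails more concrete, here is a minimal sketch of an append-only log in which each entry stores a SHA-256 fingerprint of the model artifact and the hash of the previous entry, so later tampering can be detected. The `AuditTrail` class and its field names are assumptions made for this example. A production system would also persist the log, add timestamps, and control who may write to it.

```python
import hashlib
import json


def fingerprint(payload: bytes) -> str:
    """SHA-256 hex digest used for both artifacts and log entries."""
    return hashlib.sha256(payload).hexdigest()


class AuditTrail:
    """Hypothetical hash-chained log of model lifecycle events."""

    def __init__(self):
        self.entries = []

    def record(self, model, version, action, actor, artifact: bytes):
        entry = {
            "model": model,
            "version": version,
            "action": action,  # e.g. "approved", "deployed", "updated"
            "actor": actor,
            "artifact_sha256": fingerprint(artifact),
            "prev_hash": self.entries[-1]["entry_hash"] if self.entries else "",
        }
        # Hash the entry body so any later modification becomes detectable.
        entry["entry_hash"] = fingerprint(
            json.dumps(entry, sort_keys=True).encode("utf-8"))
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check that the chain is unbroken."""
        prev_hash = ""
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "entry_hash"}
            recomputed = fingerprint(
                json.dumps(body, sort_keys=True).encode("utf-8"))
            if entry["prev_hash"] != prev_hash or recomputed != entry["entry_hash"]:
                return False
            prev_hash = entry["entry_hash"]
        return True
```

Calling `record` for each approval and deployment builds the trail, and `verify` returns `False` if any stored entry has been altered afterwards.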

Benefits of MLOps

Open Communication: MLOps helps merge machine learning workflows in a production environment to make data science and operations team collaborations frictionless.

It reduces bottlenecks formed by complicated and siloed ML models in development. MLOps-based systems establish dynamic and adaptable pipelines to enhance conventional DevOps systems to handle ever-changing, KPI-driven models.

Repeatable Workflows: MLOps allows automatic and streamlined workflow changes. A model can move through processes that accommodate data drift without significant lags. MLOps consistently gauges and orders model behavior and outcomes in real time while streamlining iterations.

Governance / Regulatory Compliance: MLOps helps enforce regulatory requirements and stringent policies. MLOps systems can reproduce models in accordance with the original standards, so as pipelines and models evolve, your systems continue to play by the rule book.

Focused Feedback: MLOps provides sophisticated monitoring capabilities, data drift visualizations, and data metrics detection over the model lifecycle to ensure high accuracy over time.

MLOps detects anomalies in machine learning development using analytics and alerting mechanisms, helping engineers quickly understand their severity and act on them promptly.

Reducing Bias: MLOps can prevent development biases that can lead to misrepresenting user requirements or subjecting the company to legal scrutiny. MLOps systems help ensure that data reports do not contain unreliable information. MLOps supports the creation of dynamic systems that do not get pigeonholed in their reporting.

MLOps boosts ML models’ credibility, reliability, and productivity in production to reduce system, resource, or human bias.

MLOps Target Users

Data Scientists: MLOps helps Data Scientists collaborate with their Ops peers and offload most of their daily model management tasks to discover new use cases, work on feature plans, and build in-depth business expertise.

Business Leaders and Executives: MLOps lets decision-makers scale organization-wide AI capabilities while tracking KPIs influencing outcomes.

Software Developers: A robust MLOps system provides developers with a functional deployment and versioning system that includes:

- Clear and straightforward APIs (REST)

- Developer support for ML operations (documentation, examples, etc.)

- Versioning and lineage control for production models

- Portable Docker images

DevOps and Data Engineers: MLOps offers a one-stop solution for DevOps and data engineering teams to handle everything from testing and validation to updates and performance management. This lets them generate value from, and scale, internal deployment and service monitoring.

The benefits include:

- No-code prediction.

- Anomaly and bias warnings.

- Accessible and optimized APIs.

- Swappable models with a choice of gating automation, smooth transitions, and 100% uptime.

Risk and Compliance Teams: An MLOps infrastructure improves the quality of oversight for complex projects. A reliable MLOps solution supports customizable governance workflow policies, process approvals, and alerts.

It promotes a system that can self-diagnose issues and notify relevant RM stakeholders, allowing for tighter enterprise control over projects. Additional MLOps management capabilities include predictions-over-time analysis and audit logging.

Final Thoughts

A well-planned MLOps strategy can lead to more efficiency, productivity, accuracy, and trusted models for the long road ahead. All it takes is embracing its potential systematically and in line with your production environment needs.

Radiant Digital’s MLOps experts can help to achieve this. Connect with us today to discuss the possibilities.